Imagine you were a CAD programmer (if you actually are one, play along with me here). What problems would you focus your energies on?

From my perspective, persistent bugs and software stability would be a good primary focus. But let's limit it to geometric modeling problems: not the run-of-the-mill "fix this bug" stuff, but the really serious problems.

George Allen, Chief Technologist & Technical Fellow at Siemens PLM Software, wrote an interesting paper for an academic conference in which he described what he sees as the big geometric modeling problems in industrial CAD/CAM/CAE software. You can download a copy of the paper at this link.

In short, Allen sees three particularly tough problem areas: filleting, history-based models, and performance.

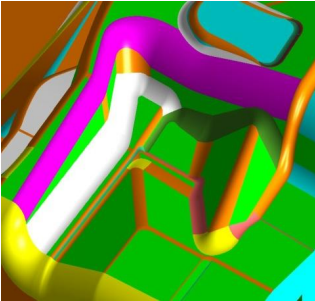

Consider filleting. According to Allen, "the filleting problem is important because it consumes a great deal of modeling time – typically as much as 40% in parts like castings, forgings, and sheet metal stampings." He points out that "the filleting functions in CAD systems are often unpredictable, counter-intuitive, and prone to failure. So, producing the desired results often requires considerable user skill, which means that the task can not be done effectively by low-priced inexperienced workers."

The problem with history-based models starts when the replay (or rebuild) process fails, which often happens if parameters (or inputs) are changed substantially from previous ones. "When this happens, the user must 'debug' the model. He has to understand the sequence of steps that was used to build it, and find out which of these steps are failing, and why. The process is very similar to the debugging of programs — in fact, in a sense, a history-based model is a program. But, unfortunately, the debugging tools are very primitive compared to those available for debugging programs. As a result, people often just give up and rebuild the model from scratch."
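To make the "a history-based model is a program" analogy concrete, here is a toy sketch in Python. Everything in it is hypothetical: the `Feature`, `replay`, `extrude`, and `fillet` names, the dict standing in for geometry, and the made-up rule that a fillet radius cannot exceed half the wall thickness. No real CAD kernel works this simply. It shows only the shape of the problem: replay re-runs each step in order, and a substantial change to an early parameter can make a later step fail, leaving the user to work out which step broke and why.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical model state: a plain dict of named values, not real geometry.
State = dict


class RebuildError(Exception):
    """Raised when a feature cannot be regenerated from its inputs."""


@dataclass
class Feature:
    name: str
    apply: Callable[[State], State]  # returns a new state or raises RebuildError


def replay(history, initial: State) -> Tuple[State, Optional[Tuple[int, str, str]]]:
    """Re-run every feature in order and stop at the first failure.

    Returns the last good state and, on failure, (step index, feature name, reason).
    """
    state = dict(initial)
    for index, feature in enumerate(history):
        try:
            state = feature.apply(state)
        except RebuildError as err:
            return state, (index, feature.name, str(err))
    return state, None


def extrude(height: float) -> Callable[[State], State]:
    def apply(state: State) -> State:
        return {**state, "thickness": height}
    return apply


def fillet(radius: float) -> Callable[[State], State]:
    def apply(state: State) -> State:
        # Toy rule (an assumption): the radius may not exceed half the wall thickness.
        if radius > state["thickness"] / 2:
            raise RebuildError(
                f"radius {radius} too large for thickness {state['thickness']}"
            )
        return {**state, "fillet": radius}
    return apply


history = [Feature("Extrude1", extrude(10.0)), Feature("Fillet1", fillet(4.0))]
print(replay(history, {}))

# Change an early parameter substantially and rebuild: the later fillet now fails.
history[0] = Feature("Extrude1", extrude(6.0))
print(replay(history, {}))
```

The first replay succeeds. The second stops at step 1 (`Fillet1`) even though nothing about the fillet itself was edited, which is exactly the kind of indirect failure a user then has to trace back by hand.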

Allen sees two distinct problems in performance. The first is large models: "Our users are dealing with enormous models. A motor vehicle, for example, will typically have around 30,000 parts, and overall data size is likely to be around 15 or 20 gigabytes. The largest parts are complex castings like the engine block and complex sheet metal parts like the floor pan." The second problem is less obvious: he notes "that some operations take a few seconds, but users really need the computations to be done in real time (in other words, in around 1/30th of a second). Lack of real-time response makes some exploratory functions unusable, and this impairs user creativity."

How much performance improvement would be enough? Allen says that “in either case, we need performance that is roughly 100x better than we have today, so clearly small incremental refinements of our current approaches will not be sufficient.”
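A quick back-of-the-envelope check shows where the real-time figure comes from. Assuming, for illustration, an operation that takes 3 seconds today (the paper says only "a few seconds"), bringing it down to about 1/30th of a second requires a speedup of:

$$
\frac{3\ \text{s}}{\tfrac{1}{30}\ \text{s}} = 3 \times 30 = 90 \approx 100\times
$$

So "roughly 100x" is the right order of magnitude for the real-time case, and it is well beyond what incremental tuning usually delivers.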

In the paper, Allen suggests some possible solutions to these problems, but he leaves things largely open. The paper was aimed at academic researchers, to point them toward research that would be of real value to developers of commercial CAD/CAM/CAE software and, ultimately, to their customers.

Take a few minutes to download and read the paper. You’ll come away with a greater understanding of how challenging it can be to create good CAD software.

I thought we were doing well with 2,000 parts.

1) Fix the problems. This is a common theme amongst users. Performance will not matter if users have to repeat things previously fixed.

2) Performance would be greatly enhanced by better software tools for simplifying models. Very few design analyses require the detail of every single one of these 30,000 parts.

Fixing the bugs should be the top priority. SolidWorks has a huge handicap when it comes to "zero thickness geometry" (ZTG). Not only does this dictate how the designer approaches certain modeling challenges, it also makes it nearly impossible at times to import models from Pro or Inventor (both of which handle ZTG with ease).

I regularly handle models in the 15K to 25K part range. Better performance would certainly help, but it has improved drastically over the years. Careful modeling is key.

Whilst I tend to agree with the key points of this paper, I would suggest that a lot more problems arise as a consequence of the annual new product cycles! All the main players strive to bring something new to their product line every year, occasionally compromising efficient programming in order to meet schedules. During beta testing it is not unusual for features, known-issue fixes, or improved functionality to be postponed or compromised in order to meet these deadlines. You only need to look at the number of service packs and hotfixes that follow each product update.

Personally, I think it is crazy to try to meet these tight deadlines just to bring a "new product" version to market. The end user rarely updates annually, and the products tend to be bogged down by inefficient programming and, no doubt, large amounts of redundancy.

The only software to buck this trend was SolidWorks, in (I believe) the 2010 version, which offered little in the way of "new features" but was revamped internally to work more efficiently, with some improved functionality in existing features.

CAD developers really need to move to a two-year cycle, which would benefit both the product lines and the end user enormously. In fact, it is quite likely that many of the key points of this paper could be resolved as a consequence.

Drifting back to the main topic: fillets can be problematic, and any effort to improve them is certainly welcome. In the interim, user expertise is essential for devising solutions to problematic situations, and that is rarely covered in any training regime... perhaps a subject for another day!