Earlier this week, I was doing some software testing on my lab machine. It’s a really nice Z1, on loan to me from HP. It has an 8-core high-end Intel processor. I brought up the process monitor as I worked, and watched, somewhat amused, as Autodesk Inventor pegged one core at 100% for several minutes, while the other cores sat there, doing almost nothing.

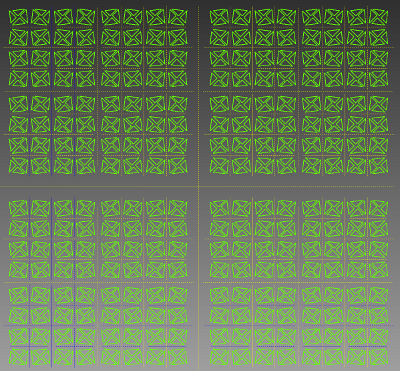

It wasn’t Inventor’s fault. Well, not really. The particular test I was doing was designed to push the 2D sketcher in Inventor to its limit. It contained 1024 triangles, connected with over 3072 constraints (I didn’t count exactly.) That sketcher uses a component called 2D DCM (Dimensional Constraint Manager), part of the D-Cubed group of software components, developed and sold by Siemens PLM Software.

Many well-known CAD programs use D-Cubed software. It’s the stuff that, when you push and pull on a sketch (or a CAD model) figures out what you’re trying to do, and calculates the resulting shape. 2D DCM is often called a “constraint manager,” or a “solver.” Built into its heart are a bunch of very complicated algorithms for solving systems of linear equations. It’s PhD level stuff.

In the case of my testing, it was 2D DCM that used all the power of one core, but ignored the other cores in my computer – essentially, leaving 7/8 of the power that HP built into the computer untapped.

So, here’s the question: Why doesn’t Siemens PLM just tell their programmers to fix 2D DCM, so it can use multiple cores? Why not rewrite it to support concurrency? If they did that, it’d solve a lot of other problems at the same time—for example, it would make creating cloud based CAD systems, that run across multiple processors and servers, a lot easier to implement.

As a start, 2D DCM has been thread-safe since 2009. A CAD system can run multiple instances of the program on parallel processors, without any significant performance hit.

So it does run on multiple cores. Problem solved?

Hardly.

In my test, I’d created a 2D sketch in Inventor. where moving any one node or edge required the system to recalculate all of the lines in all of the triangles. All 1024 of them. There were no independent constraints, where making a change would not affect other geometry. They were all interlinked.

Suppose Autodesk’s programmers had set up Inventor to use multiple instances of 2D DCM on multiple cores. How could a problem such as mine be partitioned to use those multiple instances?

The answer is: it couldn’t. Running 2D DCM on multiple cores allows those multiple instances to solve independent constraints. Not interlinked constraints.

Let me see if I can paint a picture of the problem. When I was a kid, I used to play a game called pick-up sticks. The idea was to dump out a bunch of long sticks on the floor (or table), creating a tangled pile. Each player, in turn, would remove a stick from the pile without disturbing the remaining ones.

Imagine several people playing pick-up sticks, but instead of waiting in turn, all of them trying to remove sticks at the same time. Concurrently. That’s pretty analogous to the problem of partitioning the data in a sketch in a way that it’s possible to use parallel solvers. There’s no easy way of partitioning the equations representing the system of constraints in such a way that they can be solved in parallel.

2D DCM has been around for quite a long time, as CAD component software goes. When it was designed, the programmers likely looked at the issue of parallel computing, shuddered, and decided to focus on making the software actually work right in the first place. It probably made sense at the time: Multicore processors, and even parallel computers, were rare.

Over the years, some things have changed. Multicore, parallel processors, clusters, and cloud computing are now commonplace. And there have been advances in math. Do a search on Google Scholar for “parallel solutions of linear systems” and you’ll get a lot of results. Still, adding parallel support into a tool like 2D DCM isn’t just a matter of writing some lines of code. It might involve tearing it down to the ground, and rebuilding it with a completely new architecture.

Is Siemens willing to invest what would likely be a princely sum in rebuilding their D-Cubed products from the ground up? I can’t answer that question. If I asked the folks at Siemens, and they told me, I’d not be able to tell anyone else. Trade secrets, you know. But I can say that I hope they are looking at this problem, because it’s one of the key limitations that get in the way of developing next-generation high-performance CAD software. The kind that can run on multiple cores, multiple processors, clusters, or the cloud.

I wrote this post in response to a commenter, who was raising the issue of the lack of multicore support in current CAD systems. I think his concern is valid, but I wanted to make the point that this is not a simple problem to fix, whether in geometric constraint managers, or geometric modeling kernels. It’s like the pick-up sticks problem: Really difficult, even if you throw big piles full of money at it, and wave fat paychecks at PhD mathematicians.

Still, there are people working on these problems. Next week, I’ll be writing a bit about Cloud Invent, a tiny company that may have made a breakthrough in geometric constraint modeling.

Pick-up sticks image courtesy David Namaksy, boardgamegeek.com